Emotional Intelligence

Last updated: May 1, 2026

How Outset's AI moderator recognizes and reports participants' emotions.

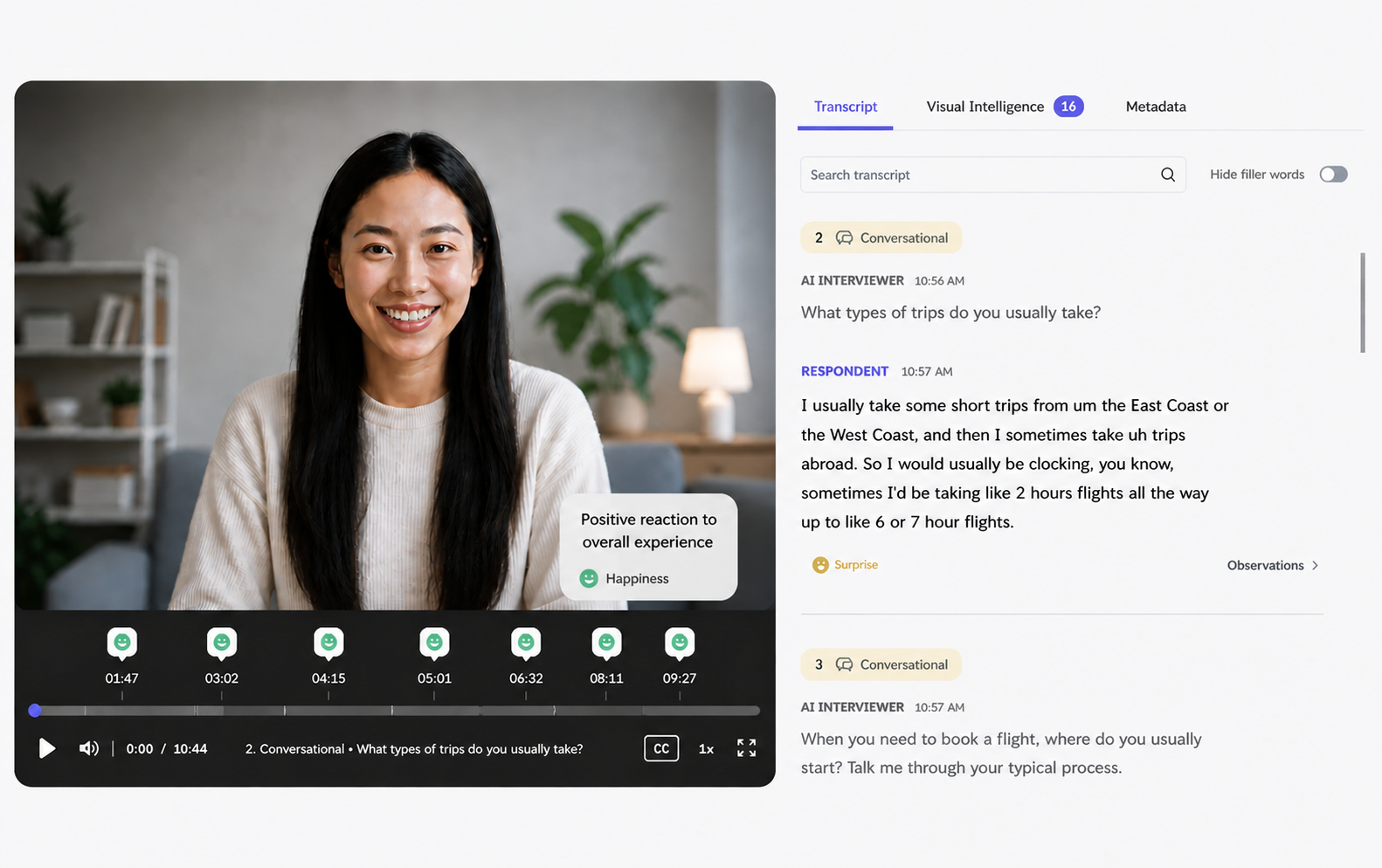

Emotional Intelligence is part of Outset's Visual Intelligence Suite. It uses AI-powered video analysis to detect and surface emotional signals in real time during video interviews. As participants respond, the AI observes facial expressions and generates time-stamped annotations that appear in your transcript and insights, helping you understand not just what participants said, but how they felt when they said it.

This is particularly useful when emotional response is central to your research: testing a new concept, evaluating a product experience, or understanding how participants react to specific moments in a usability flow.

Part 1: How It Works

Emotion Categories

Emotional Intelligence maps observed facial expressions to seven emotional states: the six basic emotion categories described by Paul Ekman, plus a Neutral state.

😠 Anger

😒 Disgust

😨 Fear

😊 Happiness

😢 Sadness

😲 Surprise

😐 Neutral

Each detected emotional moment is captured as a time-stamped observation that includes:

The emotion label (e.g., Happiness, Surprise)

Observable evidence in plain language (e.g., "smile appears," "brows furrowed," "gaze shifts away")
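For teams that work with this data outside of Outset, it can help to picture each observation as a small record. The sketch below is illustrative only: the class name, field names, and the seconds-based timestamp are assumptions, not Outset's actual data format.

```python
from dataclasses import dataclass

# Hypothetical names throughout; this is not Outset's actual schema.
EMOTIONS = ("Anger", "Disgust", "Fear", "Happiness", "Sadness", "Surprise", "Neutral")


@dataclass(frozen=True)
class EmotionObservation:
    timestamp_seconds: float  # offset into the recording (assumed unit)
    emotion: str              # one of the seven labels in EMOTIONS
    evidence: str             # plain-language cue, e.g. "smile appears"

    def __post_init__(self):
        # Reject labels outside the seven categories and negative offsets.
        if self.emotion not in EMOTIONS:
            raise ValueError(f"unknown emotion label: {self.emotion!r}")
        if self.timestamp_seconds < 0:
            raise ValueError("timestamp must be non-negative")


def format_annotation(obs):
    """Render an observation as a transcript-style line (format is assumed)."""
    minutes, seconds = divmod(int(obs.timestamp_seconds), 60)
    return f"[{minutes:02d}:{seconds:02d}] {obs.emotion}: {obs.evidence}"


print(format_annotation(EmotionObservation(75.4, "Surprise", "brows raised")))
```

Keeping the label set fixed and validating it up front makes it harder for a typo such as "Suprise" to slip into downstream counts.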

During the Interview

Emotional signals are detected and collected live while the participant is responding. However, in keeping with Outset's existing interview flow, the AI moderator will not interrupt a participant mid-answer. Any emotional probes are queued and delivered as follow-up questions after the participant has completed their current response.
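The queue-then-deliver behavior described above can be pictured with a small sketch. This is a simplified illustration under assumed names (`ProbeQueue`, `on_emotion_detected`, `end_response`), not a description of how Outset implements it.

```python
from collections import deque


class ProbeQueue:
    """Holds follow-up probes until the participant finishes answering."""

    def __init__(self):
        self._pending = deque()
        self.participant_speaking = False

    def start_response(self):
        self.participant_speaking = True

    def on_emotion_detected(self, probe_text):
        # Detection never interrupts the participant; it only enqueues.
        self._pending.append(probe_text)

    def end_response(self):
        # Once the answer is complete, release queued probes in arrival order.
        self.participant_speaking = False
        delivered = list(self._pending)
        self._pending.clear()
        return delivered


queue = ProbeQueue()
queue.start_response()
queue.on_emotion_detected("You seemed surprised just now. What stood out?")
follow_ups = queue.end_response()
```

The key design point is that detection and delivery are decoupled: signals can arrive at any time, but probes only leave the queue when the response ends.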

⚠ Tone-of-voice and speech emotion analysis are not included in this release. Emotional Intelligence is based only on facial expressions and the words the participant said.

Part 2: Outputs

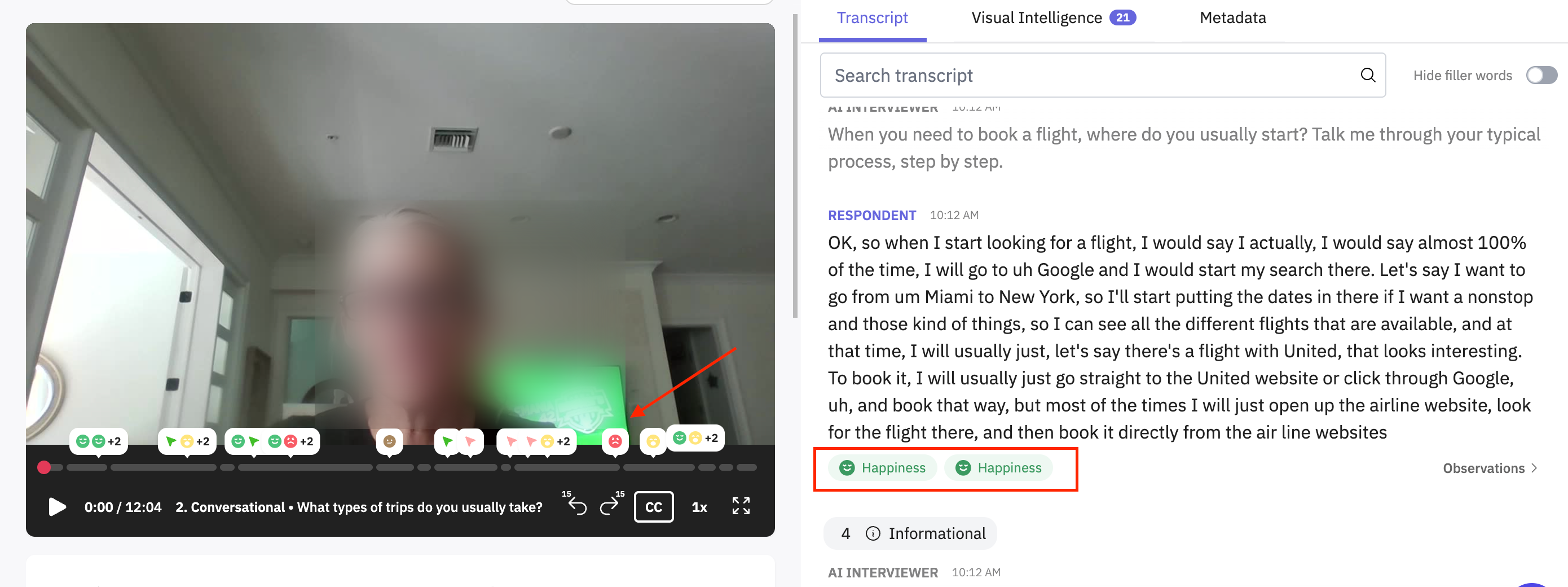

Transcript

After the interview is complete, emotional observations appear as inline annotations directly within the transcript, displayed in chronological order alongside the participant's responses. If a participant expressed multiple emotions during a single question, all relevant emotions will be shown for that question.

💡 Tip: Clicking on an emotional annotation in the transcript will take you to the exact moment in the video recording where it was detected.

Insights

On the Insights page, emotional data is surfaced at the question level:

Each question shows the dominant emotion observed across participants, determined by the highest number of observations for a single emotion

Emotional observations are sorted chronologically and by the number of participants they applied to (e.g., "8/20 participants felt 😲 Surprise during this question")

If a participant expressed multiple emotions during a single question, the most frequently observed emotion is used as the dominant signal for that participant

To share these results with your team, click the download icon in the top-right corner of the chart.
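The question-level rules above can be sketched in a few lines. This is one possible reading, not Outset's actual implementation: the function names are hypothetical, and both the tie-breaking rule and the way the pooled count combines with the per-participant count are assumptions.

```python
from collections import Counter


def participant_dominant(emotions):
    """Return the most frequently observed emotion for one participant."""
    # Ties go to the emotion seen first; the tie rule is an assumption.
    return Counter(emotions).most_common(1)[0][0]


def summarize_question(observations):
    """observations maps a participant id to that participant's emotion labels."""
    # Dominant emotion for the question: highest observation count overall.
    pooled = Counter(label for labels in observations.values() for label in labels)
    dominant = pooled.most_common(1)[0][0] if pooled else None
    # Each participant with observations contributes one dominant emotion.
    per_participant = Counter(
        participant_dominant(labels) for labels in observations.values() if labels
    )
    return {
        "dominant": dominant,
        "participant_counts": dict(per_participant),
        "total_participants": len(observations),
    }


example = {
    "p1": ["Surprise", "Surprise", "Happiness"],
    "p2": ["Surprise", "Surprise"],
    "p3": ["Happiness", "Neutral", "Happiness"],
    "p4": [],  # no emotional observations for this question
}
summary = summarize_question(example)
for emotion, count in summary["participant_counts"].items():
    print(f"{count}/{summary['total_participants']} participants felt {emotion}")
```

In this example, Surprise has the most observations overall and is also the dominant signal for two of the four participants.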

Example Use Cases

FAQs

Emotional Intelligence is available for video response interviews only. Before you get started, note that the following are not supported:

❌ Text and voice interviews

❌ Usability study types

❌ Tone-of-voice or speech emotion analysis (not included in this release)

Does Emotional Intelligence identify who a participant is or infer personal attributes?

No. Emotional Intelligence is designed with strict privacy guardrails. It does not infer identity, demographics, or any other sensitive personal attributes. Every labeled emotional moment is grounded in observable facial cues only.

Can Emotional Intelligence be used alongside Digital or Physical Intelligence in the same study?

Yes. When multiple intelligence types are active in the same study, the Results page will surface the top 2 observations per type, capping at 4 total per participant. All observations are included in exports.
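To make the "top 2 per type, 4 total" rule concrete, here is a minimal sketch. How observations are ranked, and which types are trimmed when more than 4 would qualify, is not stated above, so this example assumes each list is already ranked best-first and that types are filled in the order given. The function name and parameters are hypothetical.

```python
def select_for_results(observations_by_type, per_type=2, total_cap=4):
    """Pick up to per_type observations per intelligence type, capped overall.

    observations_by_type maps a type name to a list assumed to be ranked
    best-first. Types are processed in dict order (an assumption).
    """
    selected = []
    for type_name, ranked in observations_by_type.items():
        for obs in ranked[:per_type]:
            if len(selected) >= total_cap:
                return selected
            selected.append((type_name, obs))
    return selected


shown = select_for_results({
    "Emotional": ["smile appears", "brows furrowed", "gaze shifts away"],
    "Digital": ["hypothetical digital observation"],
})
```

Observations that are not selected here are only hidden from the Results page view; as noted above, all observations are still included in exports.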

Hope this helps! If you have any further questions, please reach out to our team at support@outset.ai or via chat.